AI agents do not need more "rules."

They need more enforcement.

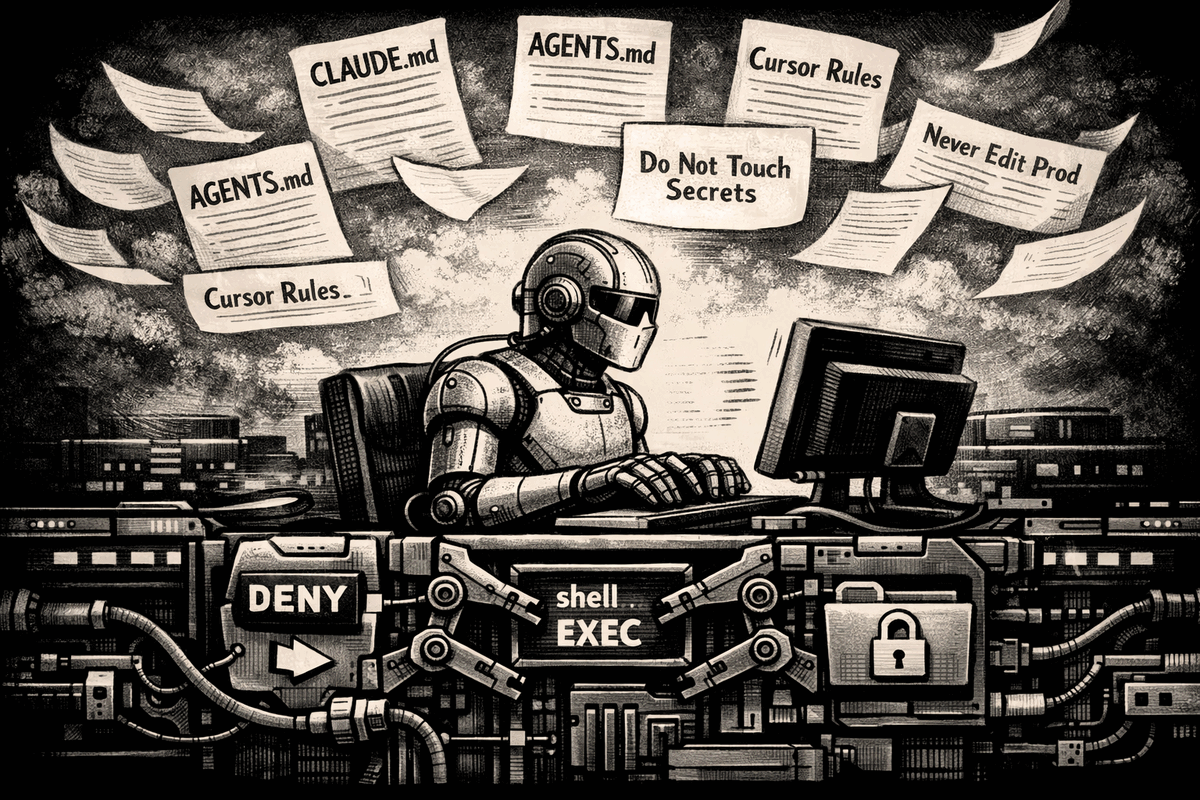

Files like CLAUDE.md, GEMINI.md, AGENTS.md, and Cursor rules are useful. They help an agent pick up project conventions, avoid known mistakes, and behave more consistently across a codebase.

But they are not really rules.

They are context.

And context is not the same thing as control.

That distinction gets lost because the files look like policy. They often contain lines like:

- never edit production config

- ask before using network tools

- do not touch secrets

- only modify files under this directory

In a real software system, those constraints are enforced by the system itself. A shell script either has permission to do something or it does not. A kernel either allows the action or blocks it. A sandbox either permits the syscall or returns an error.

A model does not enforce instructions that way.

A model treats those instructions as part of the prompt, weighs them against everything else in context, and then generates the next tokens probabilistically. The products make this visible in how they load and merge these files. Anthropic delivers CLAUDE.md content as a user message after the system prompt, not as part of the system prompt itself. OpenAI Codex reads AGENTS.md files and concatenates them into a combined instruction chain with a default size cap. Gemini CLI loads GEMINI.md files hierarchically and sends them to the model with every prompt. These are all context-loading mechanisms. Useful ones. But they are still prompt-level mechanisms, not enforcement.

That is why people keep having the same surprised reaction:

"I told the agent not to do that, and it did it anyway."

The problem is not always that the instruction was missing. Often the instruction was there, but the model did not retrieve it, prioritize it, or apply it strongly enough at the moment it mattered.

That is the real long-context problem. A larger context window means the model can fit more text into one session. It does not mean every important instruction remains equally salient inside that session.

Lost in the Middle (Liu et al., TACL 2024) showed that models use relevant information more reliably when it appears near the beginning or end of the input, and performance degrades significantly when it is buried in the middle, even for models explicitly trained on long contexts. Anthropic's own context engineering guidance reflects the same practical reality: as token count rises, models lose focus and recall degrades.

If your agent is juggling code, docs, logs, tool output, prior messages, and a long rules file, then a critical line buried in that pile becomes one more paragraph in the haystack. The needle is there. The model just does not always retrieve it when it matters.

That is why "we put it in the rules file" is not a security boundary.

It is guidance. Useful guidance, often necessary guidance. But still guidance.

The real boundary lives at the point where the agent turns intent into action.

That is the execution layer: opening files, spawning processes, invoking shells, calling tools, making network requests, touching credentials, or writing outside the expected workspace. That is the moment where a system can stop being polite and start being precise.

A prompt-side instruction says:

please don't do this

An execution-layer control says:

you cannot do this, or you must ask first, or this action is allowed only under policy and will be logged

That difference matters. The better agent products already reflect it.

Codex explicitly separates its instruction files from sandboxing and approval controls: sandbox mode defines what the agent can technically do, while approval policy defines when it must stop and ask. Gemini CLI likewise has a policy engine that evaluates allow, deny, and ask_user decisions for every tool call. Denied tools can be excluded from the model's awareness entirely. That is not a suggestion in a prompt. That is a tool the model never even sees.

These are the right instincts, but they are scoped to each vendor's own agent and runtime. They do not generalize across the custom agents, pipelines, and MCP tool chains teams are building today.

That gap between what the model was told and what the system will actually permit is the execution layer. AgentSH operates there.

AgentSH is not another instruction file stuffed into context, hoping to win attention against everything else in the prompt. It lives where agent decisions become real system activity: syscalls are intercepted, filesystem access is policy-checked, network connections are gated, and process execution is constrained before launch.

If you tell an agent in a markdown file "never read ~/.ssh," you are hoping the model remembers and obeys. If the filesystem layer blocks access to ~/.ssh, the model's memory no longer decides the outcome.

If you tell an agent "don't use the network," that is a request. If the runtime denies outbound connections, that is enforcement.

If you tell an agent "do not execute arbitrary shell commands," that is advice. If process execution is intercepted and checked against policy before launch, that is control.

So the point is not that rule files are bad. They are useful, and every serious agent stack should have them.

The point is that they are the wrong place to put guarantees.

As agents get more autonomy, more tools, and bigger context windows, it becomes more dangerous to confuse instruction with enforcement. The model may understand the rule. It may even intend to follow it. But if the action actually matters, intention is not enough.

Context can guide an agent. Only enforcement can constrain one.

← All postsBuilt by Canyon Road

We build Beacon and AgentSH to give security teams runtime control over AI tools and agents, whether supervised on endpoints or running unsupervised at scale. Policy enforced at the point of execution, not the prompt.

Contact Us →